This table contains one column of strings named “value”, and each line in the streaming text data becomes a row in the table. This lines DataFrame represents an unbounded table containing the streaming text data. split ( " " )) // Generate running word count val wordCounts = words. load () // Split the lines into words val words = lines. Create DataFrame representing the stream of input lines from connection to localhost:9999 val lines = spark. First, we have to import the necessary classes and create a local SparkSession, the starting point of all functionalities related to Spark. In any case, let’s walk through the example step-by-step and understand how it works. You can see the full code inĪnd if you download Spark, you can directly run the example. Let’s see how you can express this using Structured Streaming. Let’s say you want to maintain a running word count of text data received from a data server listening on a TCP socket. First, let’s start with a simple example of a Structured Streaming query - a streaming word count. We are going to explain the concepts mostly using the default micro-batch processing model, and then later discuss Continuous Processing model. In this guide, we are going to walk you through the programming model and the APIs. Without changing the Dataset/DataFrame operations in your queries, you will be able to choose the mode based on your application requirements. However, since Spark 2.3, we have introduced a new low-latency processing mode called Continuous Processing, which can achieve end-to-end latencies as low as 1 millisecond with at-least-once guarantees. Internally, by default, Structured Streaming queries are processed using a micro-batch processing engine, which processes data streams as a series of small batch jobs thereby achieving end-to-end latencies as low as 100 milliseconds and exactly-once fault-tolerance guarantees. 
In short, Structured Streaming provides fast, scalable, fault-tolerant, end-to-end exactly-once stream processing without the user having to reason about streaming. Finally, the system ensures end-to-end exactly-once fault-tolerance guarantees through checkpointing and Write-Ahead Logs.

The computation is executed on the same optimized Spark SQL engine. You can use the Dataset/DataFrame API in Scala, Java, Python or R to express streaming aggregations, event-time windows, stream-to-batch joins, etc. The Spark SQL engine will take care of running it incrementally and continuously and updating the final result as streaming data continues to arrive.

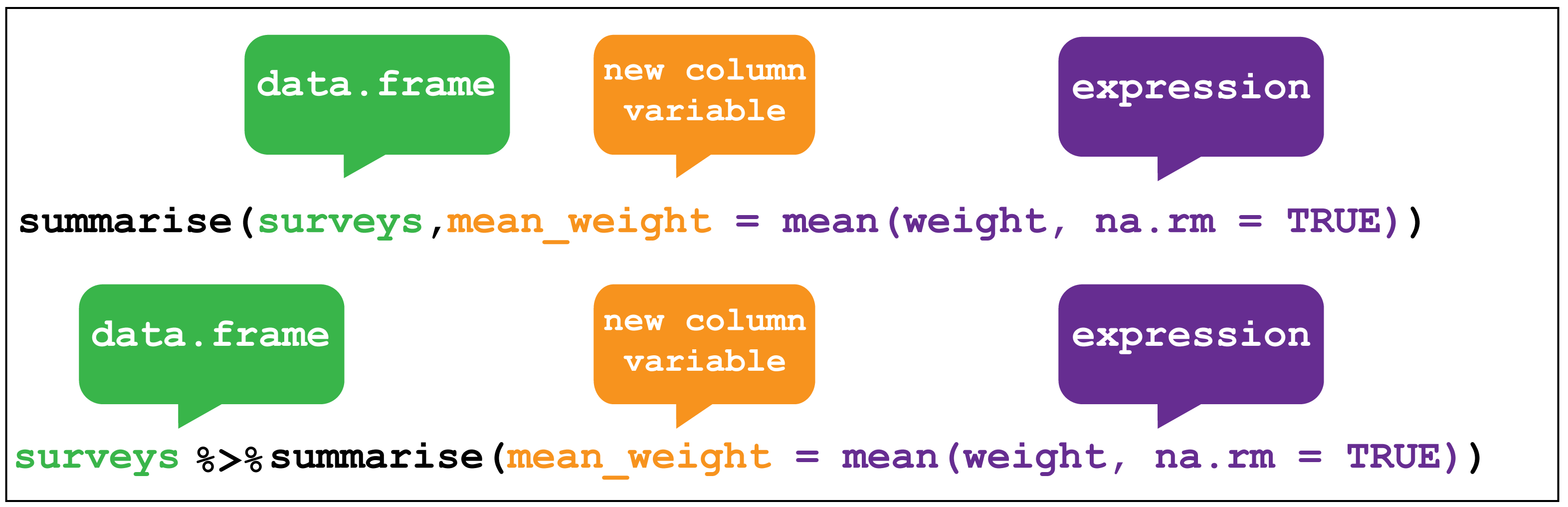

You can express your streaming computation the same way you would express a batch computation on static data. Structured Streaming is a scalable and fault-tolerant stream processing engine built on the Spark SQL engine. Recovery Semantics after Changes in a Streaming Query.Recovering from Failures with Checkpointing.Reporting Metrics programmatically using Asynchronous APIs.Policy for handling multiple watermarks.Support matrix for joins in streaming queries.Representation of the time for time window.Basic Operations - Selection, Projection, Aggregation.Operations on streaming DataFrames/Datasets.Schema inference and partition of streaming DataFrames/Datasets.Creating streaming DataFrames and streaming Datasets.
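The streaming word count described in this guide can be sketched roughly as follows. This is a minimal sketch rather than the guide's exact listing. The names `lines`, `words` and `wordCounts`, the `load()` and `split(" ")` calls, and the `localhost:9999` address come from the text. The object name, the `local[2]` master, the socket source options, and the console sink in complete output mode are assumptions filled in for illustration.

```scala
import org.apache.spark.sql.SparkSession

// Object name chosen for illustration (assumption).
object StructuredNetworkWordCount {
  def main(args: Array[String]): Unit = {
    // Local SparkSession; app name and master are illustrative choices (assumption).
    val spark = SparkSession.builder
      .appName("StructuredNetworkWordCount")
      .master("local[2]")
      .getOrCreate()

    // Needed for .as[String] and the typed flatMap below.
    import spark.implicits._

    // Create DataFrame representing the stream of input lines
    // from connection to localhost:9999.
    val lines = spark.readStream
      .format("socket")
      .option("host", "localhost")
      .option("port", 9999)
      .load()

    // Split the lines into words. The single string column is named "value".
    val words = lines.as[String].flatMap(_.split(" "))

    // Generate running word count.
    val wordCounts = words.groupBy("value").count()

    // Print the complete set of counts to the console after every update
    // (sink and output mode are assumptions; other choices are possible).
    val query = wordCounts.writeStream
      .outputMode("complete")
      .format("console")
      .start()

    // Block until the query is stopped or fails.
    query.awaitTermination()
  }
}
```

To try a sketch like this, something must be listening on port 9999 before the query starts. One common option, assuming Netcat is installed, is to run `nc -lk 9999` in a separate terminal and type lines of text into it.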
